Approaches to Extremely Reliable Power

Facilities can evaluate and optimize power quality solutions for improved reliability

The digital revolution of the past decade has increased the demand for electric power with higher reliability and quality levels than is typically delivered via the conventional centralized power system. As modern economies move into the 21st century, this requirement for high quality, reliability, and availability (QRA) of an electric power supply is expected to continue to increase as more manufacturing processes and service industries become dependent on digital-quality power for their sensitive digital devices.

From a service interruption perspective, conventional bulk or centralized power supply systems deliver power with reliability in the range of 99.0% to 99.9999%, also referred to as “two nines” to “six nines,” respectively. On average, those same systems have exhibited reliability levels of about three to four nines, or 99.9% to 99.99%. However, these reliability levels don't account for a different class of power quality disturbances. When potentially disruptive short-duration power quality disturbances are counted, especially voltage sags and momentary interruptions, the availability of disruption-free power can be one or two orders of magnitude worse than a standard interruption-based availability index suggests.
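To put the “nines” in perspective, the short sketch below converts an availability figure into expected downtime per year. It is only an illustration: the function names are hypothetical, and it assumes a non-leap year of 8,760 hours with downtime spread uniformly across it.

```python
HOURS_PER_YEAR = 8760  # assumption: non-leap year


def availability_from_nines(nines: int) -> float:
    """Return availability as a fraction, e.g. 3 nines -> 0.999."""
    return 1.0 - 10.0 ** (-nines)


def annual_downtime_hours(availability: float) -> float:
    """Expected hours per year without power at the given availability."""
    return (1.0 - availability) * HOURS_PER_YEAR


if __name__ == "__main__":
    for nines in range(2, 7):
        a = availability_from_nines(nines)
        minutes = annual_downtime_hours(a) * 60
        print(f"{nines} nines ({a:.4%}): {minutes:.1f} minutes/year")
```

Two nines corresponds to about 87.6 hours of interruption per year, while six nines corresponds to roughly half a minute. A single voltage sag that trips a sensitive process can cost far more than its duration suggests, which is why an interruption-based index can overstate the availability of disruption-free power.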

Therefore, it's important to tailor the technical solution to the level of power quality needed by the end-use equipment. For example, how sensitive is the equipment to short-duration power quality disturbances? Evaluating and optimizing power quality solutions is possible with a three-step process.

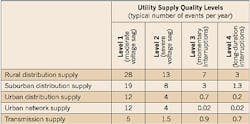

Step 1. Your first step is to define the level of power quality you need for your facility. Defining power quality levels helps characterize the power system quality, and conversely, helps define what level of power quality is needed from the power delivery system, including the utility and on-site generation equipment. Four generic levels of power quality are listed below.

- Level 1: Moderate voltage sags, ITIC (CBEMA) curve
- Level 2: Severe voltage sags
- Level 3: Momentary power interruptions
- Level 4: Long-duration power interruptions

Power quality levels provide more than just a way to characterize events. If the industry can establish a standardized hierarchy, much more is possible. Vendors can claim a certain power quality level for their equipment. End-users can specify their need for a device with a certain ride-through rating. For example, SEMI F47 provides a standard for the voltage sag immunity of semiconductor processing equipment. Utilities can advertise, or guarantee, certain levels of power quality that are more meaningful than the System Average Interruption Duration Index (SAIDI) or the System Average Interruption Frequency Index (SAIFI). Together, these effects could lead to less downtime for end-use equipment.
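As a reminder of what SAIDI and SAIFI capture, the sketch below computes both from a list of interruption events using the usual definitions: customer interruptions (SAIFI) or customer-minutes of interruption (SAIDI), divided by the total number of customers served. The event data, the `Interruption` class, and the five-minute cutoff for a "sustained" interruption are assumptions chosen for illustration, not values from the studies discussed here.

```python
from dataclasses import dataclass


@dataclass
class Interruption:
    customers_affected: int
    duration_minutes: float


def _sustained(events, min_duration_minutes):
    # Assumption: only events longer than the cutoff count toward the indices.
    return [e for e in events if e.duration_minutes > min_duration_minutes]


def saifi(events, customers_served, min_duration_minutes=5.0):
    """Average number of sustained interruptions per customer served."""
    counted = _sustained(events, min_duration_minutes)
    return sum(e.customers_affected for e in counted) / customers_served


def saidi(events, customers_served, min_duration_minutes=5.0):
    """Average sustained-interruption minutes per customer served."""
    counted = _sustained(events, min_duration_minutes)
    total = sum(e.customers_affected * e.duration_minutes for e in counted)
    return total / customers_served


# Hypothetical year of events on a feeder serving 10,000 customers.
EVENTS = [
    Interruption(customers_affected=1000, duration_minutes=90),
    Interruption(customers_affected=500, duration_minutes=30),
    Interruption(customers_affected=10000, duration_minutes=0.05),  # 3 s event
]

if __name__ == "__main__":
    print("SAIFI:", saifi(EVENTS, 10_000))
    print("SAIDI (minutes):", saidi(EVENTS, 10_000))
```

In this made-up data set, the three-second event touches every customer yet leaves both indices unchanged, which illustrates why an index built on sustained interruptions says little about Level 1 through Level 3 disturbances.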

Step 2. The next part of the analysis is quantifying the existing reliability of components and systems. This requires two forms of information. Equipment reliability data. This should include data for both utility-side and facility-side systems. It can then be used to calculate the mean time between failures (MTBF).

A complete analysis for selecting the optimal configurations includes the following steps:

- Find the MTBF of each system configuration. For example, a system configuration may be the addition of special power quality mitigation equipment installed at the facility level or upstream at the distribution level. Redundant systems are discussed in the following section.
- Estimate the annual equivalent cost for each configuration.
- For each configuration, find the annual outage cost based on the MTBF.
- Rank each scenario based on the total annual cost, which is the sum of the configuration costs and the outage costs.
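The ranking steps above can be sketched in a few lines of code. This is a minimal illustration under stated assumptions, not the method used in any particular study: the configuration names, MTBF values, and dollar figures are hypothetical, and the expected number of disruptive events per year is approximated as 8,760 hours divided by the MTBF, with repair time ignored.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 8760  # assumption: non-leap year


@dataclass
class Configuration:
    name: str
    mtbf_hours: float              # mean time between disruptive failures
    annual_equivalent_cost: float  # annualized capital plus operating cost


def annual_outage_cost(config: Configuration, cost_per_outage: float) -> float:
    """Expected yearly outage cost, assuming a fixed cost per event."""
    expected_events_per_year = HOURS_PER_YEAR / config.mtbf_hours
    return expected_events_per_year * cost_per_outage


def rank_configurations(configs, cost_per_outage):
    """Return (name, total annual cost) pairs, cheapest first."""
    totals = [
        (c.name, c.annual_equivalent_cost + annual_outage_cost(c, cost_per_outage))
        for c in configs
    ]
    return sorted(totals, key=lambda row: row[1])


# Hypothetical inputs for illustration only.
CONFIGS = [
    Configuration("Utility feed only", mtbf_hours=2190, annual_equivalent_cost=0),
    Configuration("Add UPS", mtbf_hours=8760, annual_equivalent_cost=40_000),
    Configuration("UPS plus standby generator", mtbf_hours=43_800,
                  annual_equivalent_cost=120_000),
]

if __name__ == "__main__":
    for name, total in rank_configurations(CONFIGS, cost_per_outage=50_000):
        print(f"{name}: ${total:,.0f} per year")
```

With these made-up numbers the middle option ranks first: the most redundant configuration has the lowest outage cost, but its higher annual equivalent cost outweighs the savings. Changing the cost per outage shifts the ranking, so that input deserves the most scrutiny.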

When designing a redundant system, you must consider two items.

- Physical location. Limit sharing of physical space as much as possible. If multiple utility sources are used, try to use circuits that don't share the same rights-of-way. Use circuits that originate out of different distribution substations. On the local system, physically separate backup/online generators from each other if there are redundant generators, and from the incoming utility supply.
- Maintenance. Coordinate maintenance as much as possible to limit the loss of redundancy during the process. Coordinate utility and facility maintenance whenever possible so they don't overlap, and try to avoid maintenance during stormy weather because the chances of a failure on the utility side are much higher.

Hidden failures are also important. Consider a UPS with dead batteries. If a voltage sag or interruption occurs and the UPS attempts to run off the batteries but can't because the batteries are fully discharged, a load interruption will occur. Hidden failures are difficult to track down. In a highly redundant system, the redundancy can mask failures. Take the following steps to reduce the number of such events.

- Testing. Set up a testing schedule for all equipment that's relied upon to provide support during failures. This should include all critical relays, controls, UPSs, backup generators, and transfer switches.
- Maintenance. Ensure that all UPSs, generators, and other local equipment are properly maintained.
- Diagnostics. Implement self-diagnostics and alarming on relays and other devices to reveal hidden failures.
- Loadings. Review loadings periodically to ensure that overloads aren't reducing redundancy.

Don't forget the human factor. Whether due to human error or malicious intent, many failures in redundant systems can be traced back to people. Consider human design factors in any redundant design.
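To see why the testing schedule mentioned above matters for hidden failures, consider a standby device such as a UPS battery string that can fail silently between tests. The sketch below uses a textbook approximation rather than anything taken from the case studies: it assumes a constant hidden-failure rate, and that each test finds and fixes any failure immediately. Under those assumptions the average fraction of time the device is unknowingly dead is 1 - (1 - e^(-λT)) / (λT), which is close to λT/2 when λT is small. The failure rate and test intervals are hypothetical.

```python
import math


def mean_unavailability(failure_rate_per_hour: float,
                        test_interval_hours: float) -> float:
    """Average probability that a standby unit has failed undetected.

    Assumes a constant failure rate and perfect, instantaneous test and repair.
    """
    x = failure_rate_per_hour * test_interval_hours
    if x == 0:
        return 0.0
    # math.expm1(-x) equals e^(-x) - 1, computed accurately for small x.
    return 1.0 + math.expm1(-x) / x


# Hypothetical device: one hidden failure every five years on average.
FAILURE_RATE = 1.0 / (5 * 8760)

if __name__ == "__main__":
    for label, interval in [("monthly", 730), ("quarterly", 2190), ("yearly", 8760)]:
        q = mean_unavailability(FAILURE_RATE, interval)
        print(f"Tested {label}: unavailable on demand about {q:.2%} of the time")
```

Under these assumptions, shortening the test interval reduces the chance that backup equipment is dead when it is called upon almost in proportion, which is the quantitative case for the testing and diagnostics items in the list.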

Applications in the real world. In many ways, data centers are considered great utility loads because they have very high load densities and high load factors. Such facilities can have load densities of 25 W/ft² to 50 W/ft² or more and demand very high quality power. As a result, they typically have redundant UPS systems and redundant generators.

In several case studies conducted by EPRI-PEAC, care was taken to first analyze the performance of each option versus the generalized cost of potential solutions (Fig. 2). Then, solutions were ranked based on overall cost effectiveness. These studies yielded the following generalities that were common to all:

- One size doesn't fit all. The optimal solution depends on many factors, including facility size, the convenience of a second utility feed, cost of facility interruptions, and the sensitivity and types of facility equipment.
- Some facilities are willing to pay much more for better performance, including more redundancy and less chance of failure, than their actual outage costs would justify.
- Load ride-through enhancements effectively increase performance in many situations for a relatively small cost.
- The utility-side options are most suitable for large commercial and industrial users.
- Distributed generation options look potentially viable for many high-performance needs if the utility is present as a backup.

The best system for a given facility will be location-dependent, based upon the characteristics of the existing bulk power system and the nature of the load at the site. Locations with more reliable bulk supply systems, such as urban areas where networks could be used, will need to rely less on customer-side equipment to reach high levels of performance. Locations with inadequate reliability, such as rural areas where radial distribution is used, will need to depend more on, and hence invest more in, customer-side solutions such as distributed generation and UPS-type equipment. In some cases, it may even be desirable to have an off-grid solution that's totally dependent on distributed generation and customer-side solutions.

Howard is the president of EPRI-PEAC Corp., Knoxville, Tenn. Short and Maitra are both senior consulting engineers for EPRI-PEAC Corp.