IEEE is now adopting the standardized flickermeter, which will eliminate confusion about how to evaluate lighting flicker levels.

Lighting is highly sensitive to voltage variations: fluctuations in the rms voltage of only 0.25% are enough to cause noticeable flicker in light bulbs. The sensitivity depends on the frequency and magnitude of the voltage fluctuations. The term “flicker” usually refers either to the light fluctuations that occur when the voltage supplied to a light bulb varies or to the voltage fluctuations themselves. International standards have been developed to characterize the voltage fluctuations based on their potential effects on lighting and on human perception of the lighting variations.

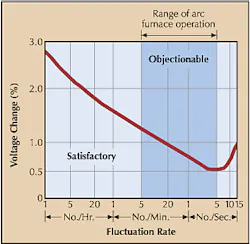

Sensitivity curves for incandescent lighting show how the voltage fluctuations can cause unacceptable variations in light output. The curve shown in Fig. 1 above is one example of a flicker sensitivity curve for an arc furnace load. It's based on the response of the human eye and brain to variations in the light output from a 60W incandescent bulb caused by fluctuations in the supply voltage.

International standards groups have based their definition of flicker on the characteristics of a test meter that incorporates weighting functions for the sensitivity of the light bulb and the human eye-brain response to light variations. The International Electrotechnical Commission (IEC) standardized this meter in Standard 61000-4-15. Output from the flickermeter consists of two basic quantities:

Pst represents short-term flicker severity, which is a per-unitized quantity where one per unit represents a flicker severity that should correspond approximately to perceptible flicker from 60W incandescent lights. A Pst value is obtained every 10 min. There are 144 Pst samples each day.

Plt represents the long-term flicker severity. Each Plt value is calculated from 12 successive Pst values (a 2-hr window) using this formula:

$$P_{lt} = \sqrt[3]{\frac{1}{12}\sum_{i=1}^{12} P_{st,i}^{3}}$$
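As a rough illustration, the cubic averaging of 12 successive Pst values into one Plt value can be sketched in a few lines of Python. The function name and the sample readings are hypothetical, chosen only to show that a few intervals of heavy flicker raise Plt well above the plain arithmetic mean.

```python
def long_term_flicker(pst_values):
    """Return Plt as the cube root of the mean of the cubed Pst values."""
    if len(pst_values) != 12:
        raise ValueError("Plt is defined over 12 successive Pst values (2 hr)")
    cubic_mean = sum(p ** 3 for p in pst_values) / len(pst_values)
    return cubic_mean ** (1.0 / 3.0)


# Hypothetical 2-hr window: ten quiet 10-min intervals and two with heavy flicker.
example_pst = [0.4] * 10 + [1.6] * 2
plt_value = long_term_flicker(example_pst)
arithmetic_mean = sum(example_pst) / len(example_pst)

print(f"Plt = {plt_value:.3f}, arithmetic mean of Pst = {arithmetic_mean:.3f}")
```

Because each Pst value is cubed before averaging, the two high readings dominate the result, which is why Plt tracks the worst intervals more closely than a simple average would.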

The flicker levels from the meter are characterized statistically. Fig. 3 shows a chart of the Pst values measured over a 24-hr period at the point of common coupling (PCC) with a steel plant. It shows the varying nature of the flicker associated with arc furnaces and other loads in the plant. An example index that could be used to characterize the flicker would be the Pst level that isn't exceeded 99% of the time (Pst PD99%). Usually, measurements should be made over a one-week period to adequately characterize the statistical nature of the flicker.
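One way to compute such an index from a logged week of Pst samples is a nearest-rank percentile, sketched below. This is a minimal sketch, not the procedure prescribed by any standard: the function name, the nearest-rank convention, and the sample data are all assumptions made for illustration.

```python
import math


def level_not_exceeded(pst_values, percent):
    """Nearest-rank percentile: the smallest sample that at least
    `percent` % of all samples do not exceed."""
    if not pst_values:
        raise ValueError("no Pst samples supplied")
    if not 0 < percent <= 100:
        raise ValueError("percent must be in (0, 100]")
    ordered = sorted(pst_values)
    rank = math.ceil(percent * len(ordered) / 100)
    return ordered[rank - 1]


# Hypothetical one-week record: 7 days x 144 samples per day = 1,008 samples.
week = [0.3] * 990 + [0.9] * 10 + [1.4] * 8
pst_99 = level_not_exceeded(week, 99)
pst_95 = level_not_exceeded(week, 95)

print(f"99% level: {pst_99}, 95% level: {pst_95}")
```

Other percentile conventions (interpolating between samples, for example) give slightly different values, so it's worth recording which one a report uses.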

The original flickermeter design was based on the effects of voltage fluctuations on a 60W incandescent light on 230V systems. A 120V bulb of the same wattage isn't as sensitive to the same voltage fluctuations: its filament must be larger to handle the higher current, and the larger filament has a longer time constant.

To adopt the flickermeter standard in North America, as IEEE Task Force 1453 recently did, an additional weighting curve was developed for 120V applications, which are more common in North America. The 120V and 230V weighting curves are compared in Fig. 4. Most flickermeters now offer evaluation using either of these curves. Make sure you know which one is being used because the difference in flicker levels is about 25%.

Many monitoring equipment manufacturers have implemented the flickermeter design that's specified in IEC 61000-4-15 and will be adopted in IEEE 1453.

Setting limits for flicker levels.

The flickermeter provides a convenient and standardized method of evaluating flicker levels. You can use it to implement flicker limits at your facility. A reasonable goal should be for the Pst levels to be less than 1.0 for 95% of the time. You should try to establish a planning limit at the 99% probability level to provide some margin with respect to the actual limit. Sometimes you can relax this to a limit of 1.0 for 99% of the time if you know that no other flicker-causing loads are in the vicinity.

Fig. 5 illustrates the flicker measurements at the PCC at a facility equipped with an arc furnace. You can clearly see the individual melt cycles and determine the background flicker levels from the periods in between melt cycles. This allows evaluation of the flicker caused by the arc furnace and evaluation with respect to a predetermined limit.
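A goal of this kind (Pst below 1.0 for 95% of the time) can be checked against a set of logged Pst samples with a short script. The sketch below assumes evenly spaced 10-min samples, so the fraction of samples stands in for the fraction of time; the function names and the sample day are hypothetical.

```python
def fraction_below(pst_values, limit=1.0):
    """Fraction of Pst samples strictly below the limit."""
    if not pst_values:
        raise ValueError("no Pst samples supplied")
    below = sum(1 for p in pst_values if p < limit)
    return below / len(pst_values)


def meets_goal(pst_values, limit=1.0, required_fraction=0.95):
    """True if Pst stays below `limit` for at least `required_fraction` of the samples."""
    return fraction_below(pst_values, limit) >= required_fraction


# Hypothetical day of 144 samples: six 10-min intervals (1 hr) above the limit.
day = [0.5] * 138 + [1.3] * 6

print(f"Below 1.0 for {fraction_below(day):.1%} of the time")
print("Meets 95% goal:", meets_goal(day))
print("Meets 99% goal:", meets_goal(day, required_fraction=0.99))
```

In this example one hour above the limit still satisfies a 95% goal but not a 99% one, which shows how much margin the choice of probability level controls.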

If the flicker levels at your facility exceed the limits, it's usually your responsibility to implement systems to control the load variations causing the fluctuations. The most common solution is a static var system that can respond quickly to the changing loads and provide compensation accordingly.

McGranaghan is vice president of Electrotek Concepts, Arlington, Va.