BICSI Field Report: 2010 Fall Conference

Although the 4,000 attendees at the BICSI Fall Conference, held Sept. 12-15 at the MGM Grand Hotel & Convention Center in Las Vegas, showed up with new business opportunities and restricted budgets on their minds, they were still able to focus on the emerging technologies being discussed and learn about new cabling products and hardware that can support the next generation of networks.

IP convergence, which allows various types of devices to be attached to a network designed for Ethernet data and voice applications, is bringing new thinking regarding networks and cabling. Because it was originally developed to address data and voice applications in a specific environment (e.g., office spaces), the 568 telecom standard does not provide flexibility for applications such as surveillance cameras, which has prompted the study of other options. The Ethernet protocol has been the backbone of network design. Now, with Power over Ethernet (PoE) technology, the cabling also carries DC electrical power, which is driving market growth for video surveillance cameras, wireless access points, and other applications. PoE developers are also addressing the need for higher wattage delivery by placing current on all four pairs of the cable.

While copper cable is being pushed to its limits for achieving higher speeds in data centers and storage area networks, multimode fiber systems continue to provide a cost-effective cabling solution for data centers, local area networks, and other applications. Compared to single mode fiber, multimode systems offer significantly lower costs for transceivers, connectors, and connector installation while meeting the bandwidth and other engineering requirements.

Cooling is another critical issue. The rising cost of powering the servers and air conditioners needed to keep equipment within thermal specs continues to be a major challenge, as the data center is being redefined by technology developments. Industry surveys estimate that cooling accounts for up to 40% of a data center's total energy load. However, new power control technology within blade servers, along with methods such as voltage regulation and variable-speed fans, is helping IT professionals keep these costs under control. Most data centers use a hot aisle/cold aisle configuration to help contain hot air emerging from the back of the equipment. Many industry seminars focused attention on the numerous opportunities for passive cooling in the vicinity of the cabling and at the equipment racks to enhance the hot aisle/cold aisle layout.

On the conference presentation front, speakers at more than 20 educational sessions covered topics ranging from PoE to data center efficiency to electronic safety and security.

In "The Ins, the Outs, and the Bends of OM-4 Fibers for Enterprise Networks," Tony Irujo of OFS, Sturbridge, Mass., discussed the latest developments in multimode optical fiber, specifically OM4 50 micron multimode fiber, which has become the transmission medium of choice. Today, as data rates surpass 10 Gbps and lasers have replaced LEDs, the 62.5 micron fiber construction has reached its performance limit. The 50 micron fiber offers ten times the bandwidth of the 62.5 micron fiber. Additionally, for next-generation 40 Gbps and 100 Gbps Ethernet, only OM3 and OM4 fibers are cited in the draft standard.

In "40 Gbps Over Twisted-Pair Cabling: Planning for a New Ethernet Application," Valerie Maguire of Siemon, Watertown, Conn., and Todd Harpel of Berk-Tek, New Holland, Pa., described the usefulness of copper cabling for 10 Gbps and higher speeds in the data center. About 92% of the channels in a typical data center fall between 30 m and 50 m. Thus, copper can serve 40 Gbps Ethernet applications, using a fully shielded Cat. 7A cable construction for distances less than 100 m. A likely starting point is to satisfy short-term needs (three to five years) by specifying 40 Gbps Ethernet on fully shielded cabling and adopting a short-length cabling topology. For example, a cable manufacturer recently introduced a special UTP construction that is not exactly a Cat. 6A cable, but it can support 10GBase-T up to a maximum distance of 60 m. With a designation of "LD," for "limited distance," it meets all the requirements for Cat. 6A component compliance in TIA-568-C.2, but only at that distance.
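The short-length argument above can be checked against a site's own cable records. The following is a minimal sketch, not part of the presentation: the channel lengths are hypothetical sample data, and the only figures taken from the text are the 60 m reach of the "LD" construction and the 100 m channel limit.

```python
def fraction_within_reach(channel_lengths_m, max_reach_m):
    """Return the share of channels whose length does not exceed max_reach_m."""
    if not channel_lengths_m:
        raise ValueError("at least one channel length is required")
    within = sum(1 for length in channel_lengths_m if length <= max_reach_m)
    return within / len(channel_lengths_m)


# Hypothetical channel lengths (in meters) from an imagined cable audit.
sample_lengths_m = [12, 28, 35, 41, 47, 52, 58, 63, 75, 90]

LD_REACH_M = 60        # reach quoted in the text for the "LD" construction
FULL_CHANNEL_M = 100   # standard horizontal channel limit

ld_share = fraction_within_reach(sample_lengths_m, LD_REACH_M)
full_share = fraction_within_reach(sample_lengths_m, FULL_CHANNEL_M)

print(f"Channels within {LD_REACH_M} m: {ld_share:.0%}")
print(f"Channels within {FULL_CHANNEL_M} m: {full_share:.0%}")
```

Running the same calculation on real as-built lengths would show how many channels a limited-distance product could cover before a full-reach cable is needed.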

In "Power over Ethernet and Fiber Networks is Not an Oxymoron," Ty Estes of Omnitron Systems Technology, Inc., Irvine, Calif., showed how PoE, which can remotely power IP cameras, Wi-Fi access points, and other devices, does not have to be restricted to the 100-m limitation of horizontal UTP cabling. A network circuit can be greatly extended with optical fiber cable that connects to a media converter supplied by a local source of AC or DC power. At that location, Cat. 5 cabling distributes both low-voltage power and the network connection to the powered device. In addition to extending the practical distance of the system, this arrangement offers higher bandwidth (i.e., capacity), better security, and longer useful life.
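As a rough illustration of the arrangement described above, the sketch below splits a run into a fiber segment and a copper tail. It is an assumption-laden planning aid, not the presenter's method: the function name, its parameters, and the example distances are hypothetical, and only the 100 m copper limit comes from the text.

```python
COPPER_LIMIT_M = 100.0  # horizontal UTP limit cited in the text


def plan_poe_run(total_distance_m, copper_tail_m=COPPER_LIMIT_M):
    """Split a run into fiber and copper segments.

    If the powered device is within the copper limit, no fiber is needed.
    Otherwise fiber carries the link to a media converter, and copper
    (data plus PoE) covers the last copper_tail_m meters.
    """
    if total_distance_m <= 0:
        raise ValueError("total_distance_m must be positive")
    if not 0 < copper_tail_m <= COPPER_LIMIT_M:
        raise ValueError("copper_tail_m must be between 0 and 100 m")

    if total_distance_m <= COPPER_LIMIT_M:
        return {"fiber_m": 0.0,
                "copper_m": float(total_distance_m),
                "needs_media_converter": False}

    return {"fiber_m": float(total_distance_m - copper_tail_m),
            "copper_m": float(copper_tail_m),
            "needs_media_converter": True}


# Hypothetical examples: a camera 80 m away and one 450 m away.
near_camera = plan_poe_run(80)
far_camera = plan_poe_run(450, copper_tail_m=30)
print(near_camera)
print(far_camera)
```

The media converter still needs local AC or DC power at the far end, so the sketch covers only the cabling split, not the power budget.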

In "Data Centers in a Green World — A High-Powered Paradox!," Robert McFarlane of Shen, Milsom & Wilke, LLC, New York, N.Y., focused on the need for data centers to be more energy efficient. He drove the point home with examples of how improvements can be achieved: minimize fan usage with electronically commutated (EC) motors and variable-frequency drives; follow good raised-floor design, with cable and piping parallel to air flow; use tight joint and edge seals and cable penetration seals; and place grates and perforated tiles to direct the cool air at the equipment. Because operational inefficiencies have often been reflected in an oversizing of the power and cooling systems, newer cooling solutions are being offered that combine sensing and control of all circuits. Direct processor cooling is also being deployed. Within the data center pathways, optical fiber and copper pre-terminated cable assemblies provide a smaller diameter. These bundled cable assemblies require less packaging, involve fewer consumables, and thus achieve energy and waste savings throughout the manufacturing/distribution/installation chain. To minimize power consumption, the UPS array should be modular and scalable, and the use of a 3-phase AC power system at the highest possible distribution voltage (e.g., 208/120V, 380/220V, and 415/240V) is preferred. Many existing data centers were built without anticipating today's higher density rack systems marked by blade servers and densely packed virtualized environments, so the use of Energy Star servers is advisable. A full "wrap-around" external bypass for the power system is also recommended to meet both uptime requirements and future business goals.
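The preference for a higher distribution voltage follows from basic three-phase arithmetic. The sketch below is not from the presentation. It assumes a balanced load, a hypothetical 10 kW rack, and unity power factor, and it uses the standard relations that line-to-line voltage is √3 times line-to-neutral voltage and that line current is P / (√3 × V × power factor).

```python
import math


def line_to_line_voltage(line_to_neutral_v):
    """Line-to-line voltage of a balanced three-phase wye system."""
    return math.sqrt(3) * line_to_neutral_v


def line_current_a(power_w, line_to_line_v, power_factor=1.0):
    """Line current drawn by a balanced three-phase load."""
    return power_w / (math.sqrt(3) * line_to_line_v * power_factor)


# The voltage pairs quoted in the text are line-to-line/line-to-neutral.
for neutral_v in (120, 220, 240):
    print(f"{neutral_v} V line-to-neutral -> "
          f"{line_to_line_voltage(neutral_v):.1f} V line-to-line")

RACK_POWER_W = 10_000  # hypothetical rack load, for illustration only

current_208 = line_current_a(RACK_POWER_W, 208)
current_415 = line_current_a(RACK_POWER_W, 415)
print(f"208 V: {current_208:.1f} A per line")
print(f"415 V: {current_415:.1f} A per line")
```

For the same load, roughly doubling the voltage halves the line current. Resistive loss in a given conductor scales with the square of the current, which is one reason higher distribution voltages are favored.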

In "Layer Zero: A New Perspective on the OSI Model," Greg Hinders of Legrand/Ortronics, Broomfield, Colo., and Scott McKean of CommSol, Phoenix, underscored the importance of installing a cabling system that will optimize network performance for many years to come. They proposed the concept of a "Layer Zero," which promotes advanced cable management to optimize cable routing at the rack, along with measures such as switch port protection and bend-limiting clips. In addition, optimum placement of cabling at the equipment location can assist passive cooling at the racks, and metal basket tray can maximize airflow around the cable bundles. By consistently following best practices in the installation of the physical layer, minimum signal degradation can be achieved throughout the life of the facility. For example, compared to two-fiber jumpers at a patch panel, harness assemblies significantly reduce the bulk of cabling entering the electronic equipment. In addition, the harness assembly can be configured to match the profile of the equipment rack.

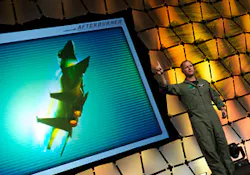

In "The Path to the ESS Credential," Steve Surfaro of Axis Communications, Chelmsford, Mass., explained that under BICSI's NxtGEN program, an RCDD designation is not a prerequisite for the Electronic Safety and Security (ESS) specialty. Therefore, installers and technicians have an opportunity to seek out this path. Their design experience in the field may also satisfy the design requirement. Surfaro reviewed some of the material in the training manual, including: simplified security development theory; video content analysis; the definition of image quality, high-definition TV, hosted video, and virtualization; private and public Enterprise cloud deployment; and the role of Central Station Automation Platforms. In response to this ever-evolving industry, BICSI plans to introduce three new courses relating to ESS.

In "Optical Fiber Networks, Industry Trends, Application Influences, and New Options for Networks," Herbert Congdon of Tyco Electronics, Conover, N.C., explained the evolution of fiber optic structured cabling system architectures. Because optical fiber is capable of signal transmission well beyond the 100 m limitation of copper cabling defined in the 568 standard, the TSB-72 Centralized Optical Fiber Cabling Guidelines were issued in 1995, and centralized cabling has been supported since the publication of the 568-B.1 standard in 2001. Centralized optical fiber cabling may be used as an alternative to the optical cross-connect to support centralized electronics deployment in a single-tenant building. The main cross-connect is located in the equipment room, and the telecom room serves as a passive optical interconnect location. Typically, two to four fiber cables go directly to outlets at the work area. The advantages of this arrangement include security, more efficient use of switch ports, and centralized power and backup systems. Some of the disadvantages are: the cost is comparatively high (because media converters are used rather than network interface cards); moves, adds, and changes are more difficult; PoE is not supported; and a high concentration of cable resides in the equipment room.

Another network architecture, Fiber to the Enclosure (FTTE), has a fiber backbone passing through the telecom room to remote wall-mounted enclosures in the office spaces, where a conversion to a copper network takes place. The advantages of this arrangement include: quick deployment, as new technology is easily integrated; scalability with minimum cost and disruption; and simplicity in doing moves, adds, and changes. Some of the disadvantages include: lack of security for dispersed electronics; heat and noise near workstations; and a limit on the number of users per enclosure.

In "Optical Connectivity Enables the Green Data Center," Doug Coleman of Corning Cable Systems, Hickory, N.C., described the advantages of using optical fiber in high concentrations, with OM3 and OM4 laser-optimized 50/125-micron multimode fiber being the choice today. As data rates increase to 40 Gbps and beyond, the landscape favors an all-optical solution in the horizontal distribution area. And as port count increases, significant energy savings can be achieved by using an optical fiber-based solution over a UTP-based solution. Several optical interconnection technologies, such as MTP/MPO-style trunking cable assemblies, duplex LC-connected optical fiber cables, and plug-and-play array modules, can help speed deployment and maintenance in a data center. Array optical fiber connectors are the newest recognized style of optical fiber interface for supporting high-density applications, as well as 40GBase-SR4 and 100GBase-SR10, which will need more than two optical fibers per link or channel.
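To show why these applications need more than two fibers, the sketch below tallies fibers per link. The lane counts are not from the presentation; they reflect the parallel-optics layout in IEEE 802.3ba, where each 10 Gbps lane uses one fiber to transmit and one to receive. The trunk size in the example is hypothetical.

```python
# Parallel lanes per direction, each running at 10 Gbps (per IEEE 802.3ba).
LANES_PER_DIRECTION = {
    "10GBase-SR": 1,
    "40GBase-SR4": 4,
    "100GBase-SR10": 10,
}


def fibers_per_link(application):
    """Fibers needed for one link: one per lane in each direction."""
    return 2 * LANES_PER_DIRECTION[application]


def links_per_trunk(application, trunk_fiber_count):
    """Whole links that fit on a trunk with the given fiber count."""
    return trunk_fiber_count // fibers_per_link(application)


for app in LANES_PER_DIRECTION:
    print(f"{app}: {fibers_per_link(app)} fibers per link")

# Hypothetical 144-fiber trunk, chosen only to illustrate the density impact.
TRUNK_FIBERS = 144
for app in LANES_PER_DIRECTION:
    print(f"{app}: {links_per_trunk(app, TRUNK_FIBERS)} links on "
          f"{TRUNK_FIBERS} fibers")
```

The jump from 2 fibers to 8 or 20 per link is what makes array connectors and pre-terminated trunks attractive for these speeds.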

About the Author

Joseph R. Knisley

Lighting Consultant

Joe earned a BA degree from Queens College and trained as an electronics technician in the U.S. Navy. He is a member of the IEEE Communications Society, Building Industry Consulting Service International (BICSI), and IESNA. Joe worked on the editorial staff of Electrical Wholesaling magazine before joining EC&M in 1969. He received the Jesse H. Neal Award for Editorial Excellence in 1966 and 1968. He currently serves as the group's resident expert on the topics of voice/video/data communications technology and lighting.